Engineering leaders and QA managers often spend hours combing through logs, dashboards, and spreadsheets to answer simple questions about software release health. The unfortunate reality is that the modern software delivery lifecycle produces an abundance of test data, but without the right tools and strategy, that data yields few actionable insights.

This guide provides a framework for writing effective software testing prompts so you can make the most of your test data. Although it was designed around Sauce AI for Insights, the tips apply to any kind of software testing prompt. Whether you are an engineering director looking for strategic visibility or a QA manager ensuring daily test health, these patterns will help you extract maximum value from your testing data.

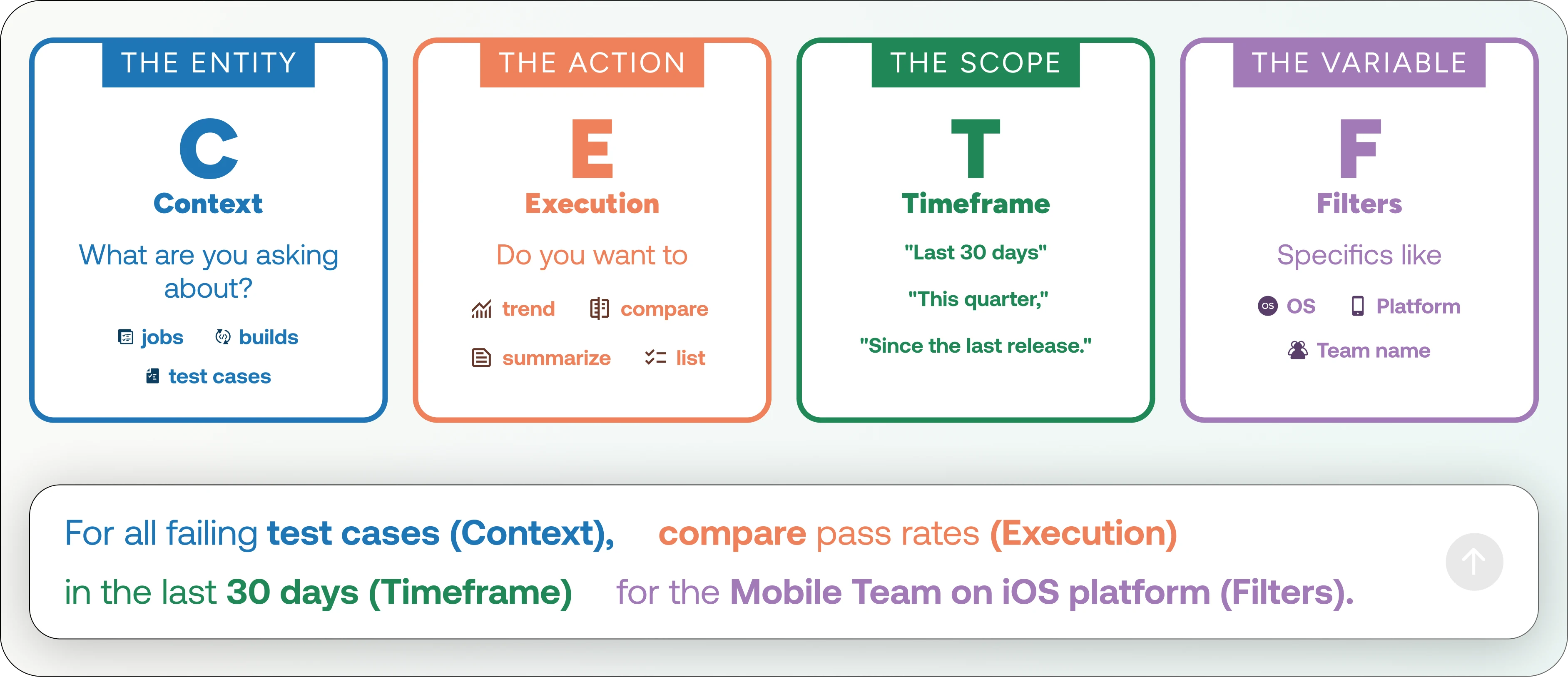

Here is the framework applied to a simple hypothetical example:

Vague prompt: "Show me the latest test failures."

Better prompt: "Compare pass rates between Android and iOS (Filter) for the last 90 days (Timeframe) to identify disparity in quality (Context/Goal)."
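The labeled elements in the better prompt can also be treated as required fields. The sketch below is only an illustration: `PromptParts` and `build_prompt` are hypothetical names, not part of any Sauce Labs API, and the split into action, scope, timeframe, and goal is an assumption drawn from the labels in the example.

```python
from dataclasses import dataclass


@dataclass
class PromptParts:
    """Hypothetical container for the elements labeled in the example prompt."""
    action: str     # what to do, e.g. "Compare pass rates"
    scope: str      # the Filter, e.g. "between Android and iOS"
    timeframe: str  # the Timeframe, e.g. "for the last 90 days"
    goal: str       # the Context/Goal, e.g. "to identify disparity in quality"


def build_prompt(parts: PromptParts) -> str:
    """Join the parts into one sentence, rejecting any empty element."""
    for name, value in vars(parts).items():
        if not value.strip():
            raise ValueError(f"missing prompt element: {name}")
    return f"{parts.action} {parts.scope} {parts.timeframe} {parts.goal}."


prompt = build_prompt(PromptParts(
    action="Compare pass rates",
    scope="between Android and iOS",
    timeframe="for the last 90 days",
    goal="to identify disparity in quality",
))
print(prompt)
```

Leaving any field blank raises an error, which mirrors the point of the framework: a prompt without a filter, timeframe, or goal tends to be the vague kind.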

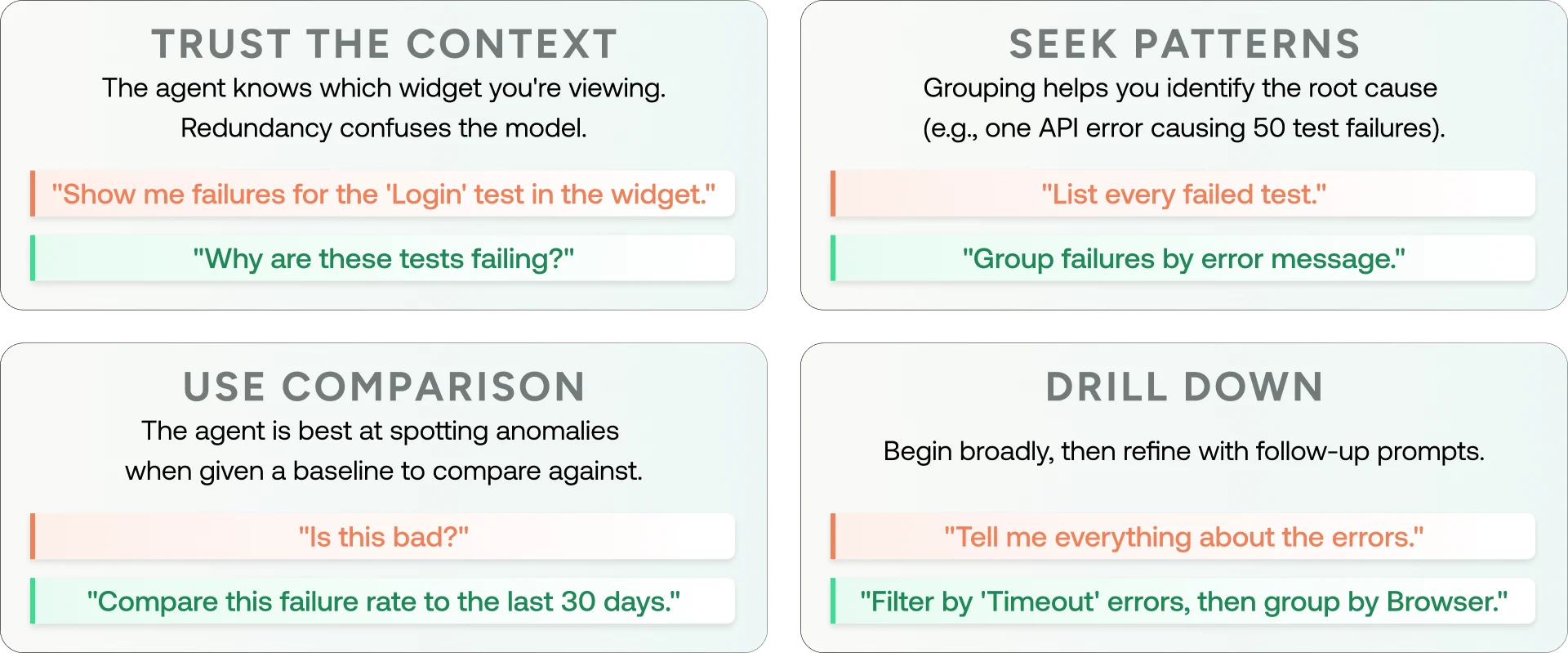

If you are using Sauce AI for Insights, applying these additional principles will also help you get the most useful outputs:

If you’d like to drill down into optimizing Sauce AI for Insights usage, be sure to read the product-specific prompting guide found within the Sauce Labs documentation.

Now that we’ve established what context your prompt should include, let’s explore prompt optimization based on your role.

Prompting for engineering directors: Optimizing for strategy and speed

Goal: Strategic visibility and delivery performance

Engineering directors should not be bogged down in individual test logs. Instead, they must optimize for macro patterns, cross-team velocity, and release risk. The end goal is to direct resources to areas with the greatest impact on delivery.

Key prompt patterns for engineering directors

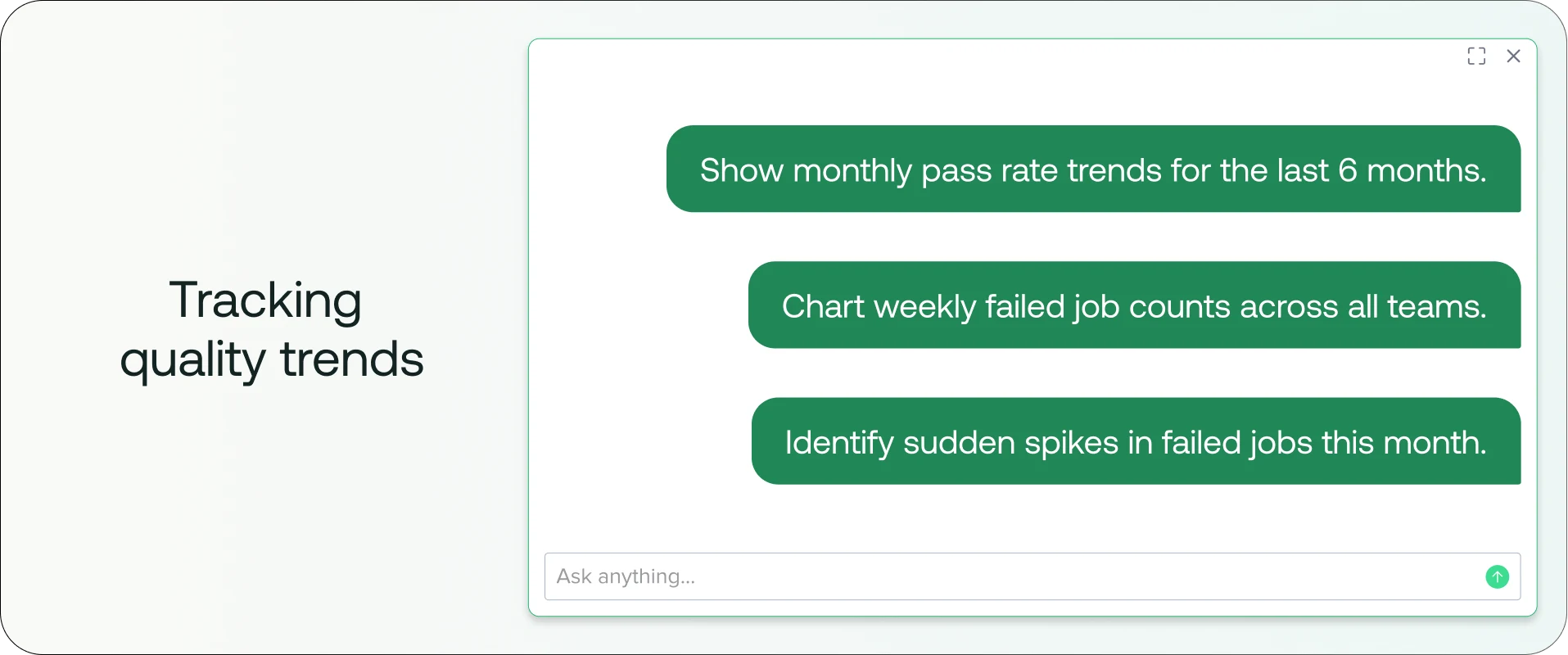

1. Tracking quality trends

The insight: Trend analysis highlights systemic risk rather than just tactical failures.

The prompts (baseline examples that you can adjust to your particular scenario and data):

Show monthly pass rate trends for the last 6 months.

Chart weekly failed job counts across all teams.

Identify sudden spikes in failed jobs this month.

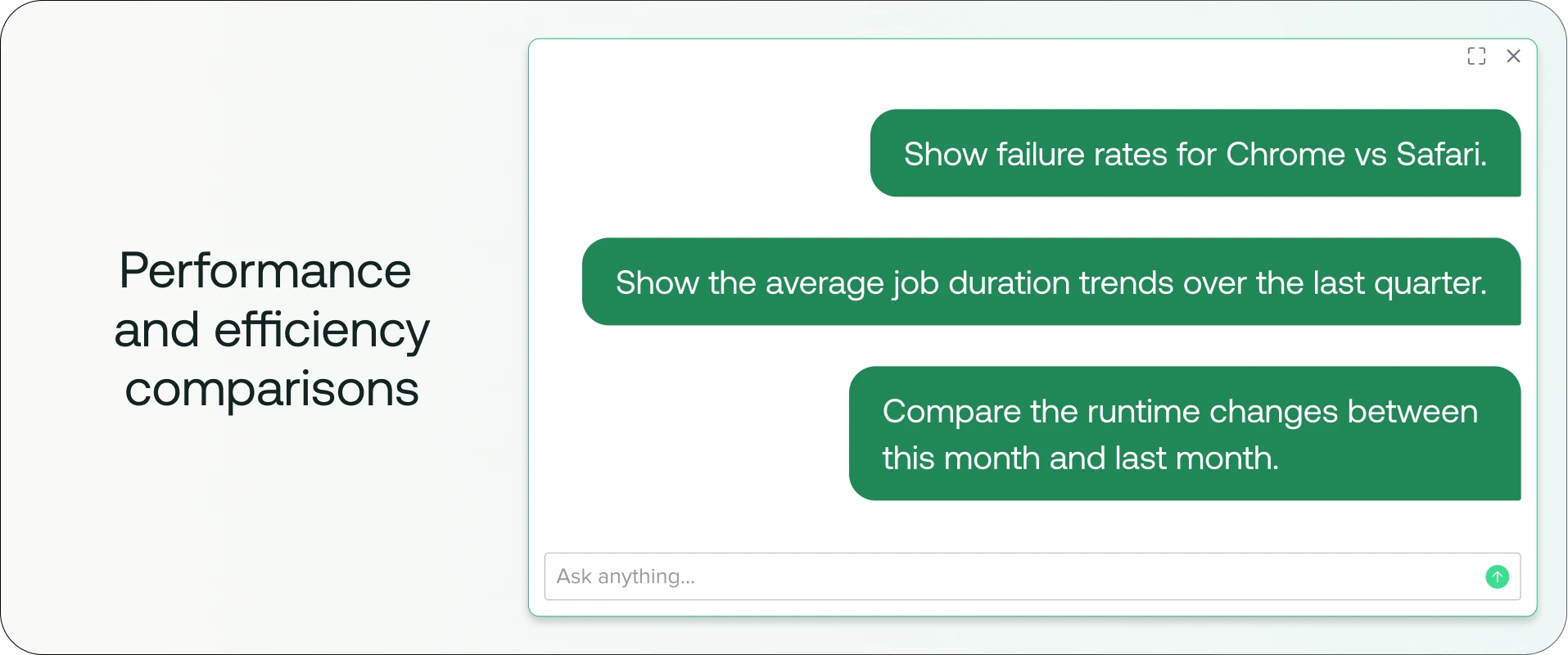

2. Performance and efficiency comparisons

The insight: Cross-team and cross-platform comparisons reveal disparities in execution effectiveness and help identify where investment is needed.

The prompts:

Show failure rates for Chrome vs Safari.

Show the average job duration trends over the last quarter.

Compare the runtime changes between this month and last month.

3. Evaluating release risk

The insight: Catching declining quality before a release prevents costly rollbacks. These prompts help anticipate instability before it reaches production.

The prompts:

List builds with failure rates above 20% in the last 30 days.

Show builds with declining pass rates over time.
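To make the first prompt concrete, here is a minimal sketch of the calculation it asks for: the failure rate per build within a time window, filtered by a threshold. The job records, build names, and the `risky_builds` function are hypothetical, and this is not a description of how Sauce AI for Insights computes its answers.

```python
from collections import defaultdict
from datetime import date, timedelta

# Hypothetical job records: (build name, run date, passed?)
JOBS = [
    ("checkout-ui", date(2024, 5, 28), False),
    ("checkout-ui", date(2024, 5, 29), True),
    ("checkout-ui", date(2024, 5, 30), False),
    ("search-api", date(2024, 5, 27), True),
    ("search-api", date(2024, 5, 30), True),
    ("search-api", date(2024, 4, 1), False),  # outside the 30-day window
]


def risky_builds(jobs, today, window_days=30, threshold=0.20):
    """Return {build: failure rate} for builds above the threshold in the window."""
    cutoff = today - timedelta(days=window_days)
    totals = defaultdict(int)
    failures = defaultdict(int)
    for build, run_date, passed in jobs:
        if cutoff <= run_date <= today:
            totals[build] += 1
            if not passed:
                failures[build] += 1
    return {
        build: failures[build] / totals[build]
        for build in totals
        if failures[build] / totals[build] > threshold
    }


result = risky_builds(JOBS, today=date(2024, 5, 31))
print(result)
```

Spelling out the window and the threshold in the prompt, as the example does with "above 20%" and "last 30 days", removes the two parameters that would otherwise be left to guesswork.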

The more you prompt Sauce AI, the better it will get at context, details, and ultimately value. These prompts help you get started and are ripe for customization as you continue to evolve your prompting strategy.

Prompting guide for QA managers: Optimizing for stability and day-to-day operations

Goal: Operational clarity and daily test health

While engineering directors focus on longer time horizons, QA managers are responsible for day-to-day efforts. They need to focus on reducing flakiness, monitoring coverage gaps, and shortening feedback loops by identifying any regression and reliability issues as quickly as possible.

Key prompt patterns for QA managers

1. Stability monitoring

The insight: Surface instability before it becomes a release blocker by tracking daily execution patterns.

The prompts:

Show the daily pass/fail trend over the last 30 days.

Compare this week's pass rate to last week's.

Chart flaky test cases detected in the last 60 days.

2. Flakiness and persistent failures

The insight: A flaky test is worse than no test because it erodes confidence. Managers must isolate recurring issues from one-off noise.

The prompts:

Which test cases failed more than 3 times in the last 14 days?

Compare flaky vs stable test case counts by platform.

List consistently failing iOS test cases from the past month.
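The distinction these prompts draw between recurring failures and one-off noise can be sketched as follows. The test names and results are hypothetical, and the definitions are assumptions made for this illustration: a test with both passes and failures in the window is labeled "flaky", and one with no passes is labeled "consistently failing". Your platform may define flakiness differently.

```python
from collections import defaultdict

# Hypothetical results from the last 14 days: (test case, passed?)
RESULTS = [
    ("login_test", False), ("login_test", True), ("login_test", False),
    ("login_test", False), ("login_test", False),
    ("cart_test", False), ("cart_test", False), ("cart_test", False),
    ("cart_test", False),
    ("search_test", True), ("search_test", False), ("search_test", True),
]


def classify_failures(results, min_failures=3):
    """Label tests that failed more than `min_failures` times.

    Assumption for this sketch: mixed passes and failures means "flaky";
    failures with no passes means "consistently failing".
    """
    passes = defaultdict(int)
    fails = defaultdict(int)
    for test, passed in results:
        if passed:
            passes[test] += 1
        else:
            fails[test] += 1
    labels = {}
    for test, count in fails.items():
        if count > min_failures:
            labels[test] = "flaky" if passes[test] > 0 else "consistently failing"
    return labels


labels = classify_failures(RESULTS)
print(labels)
```

A test that failed only once, like `search_test` here, drops out entirely, which is the one-off noise the threshold is meant to filter.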

3. Root cause investigation

The insight: Use specific identifiers to move from detection to diagnosis instantly.

The prompts:

Why did job [Job ID] fail?

Analyze differences between passing and failing runs of build [Build Name].

What changed between successful and failed runs?

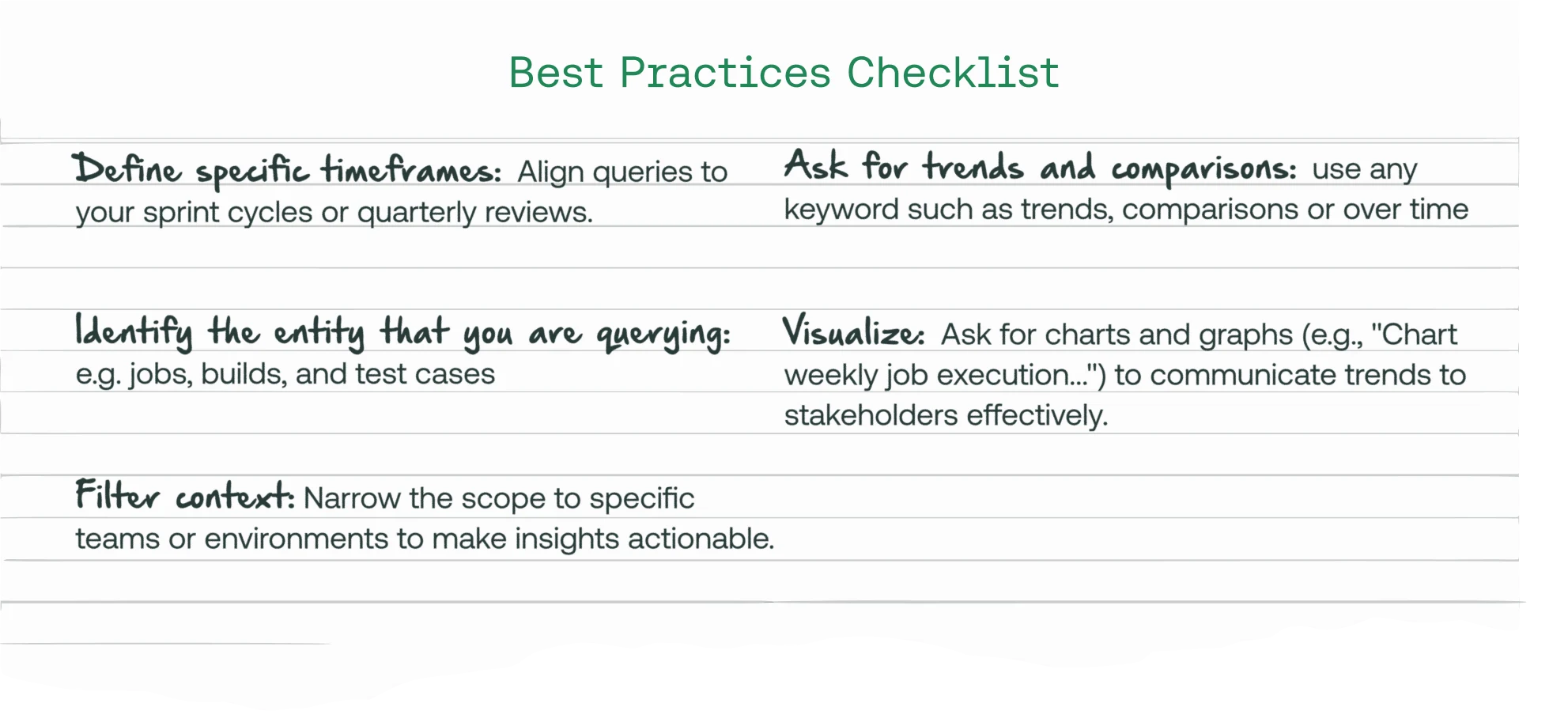

Best practices checklist for software testing prompting

To ensure you get the best results from your AI queries, follow this baseline checklist when writing and refining your prompts:

Define specific timeframes: Align queries to your sprint cycles or quarterly reviews.

Identify the entity you are querying: State whether you mean jobs, builds, or test cases.

Filter context: Narrow the scope to specific teams or environments to make insights actionable.

Ask for trends and comparisons: Use keywords such as "trends," "comparisons," or "over time."

Visualize: Ask for charts and graphs (e.g., "Chart weekly job execution...") to communicate trends to stakeholders effectively.
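Part of this checklist can be applied mechanically before a prompt is sent. The sketch below is a rough heuristic rather than a validated tool: the `CHECKS` patterns and the `missing_elements` function are hypothetical, they cover only the timeframe, entity, and trend/comparison items, and the keyword lists would need extending for your own vocabulary.

```python
import re

# Hypothetical keyword patterns for three of the checklist items.
CHECKS = {
    "timeframe": r"\b(last|past|this)\b.*\b(days?|weeks?|months?|quarters?|sprints?)\b",
    "entity": r"\b(jobs?|builds?|test cases?)\b",
    "trend or comparison": r"\b(trends?|compar\w+|over time|vs)\b",
}


def missing_elements(prompt: str) -> list[str]:
    """Return the checklist items the prompt does not appear to cover."""
    text = prompt.lower()
    return [name for name, pattern in CHECKS.items() if not re.search(pattern, text)]


vague = missing_elements("Show me the latest test failures.")
specific = missing_elements(
    "Show builds with declining pass rates over time in the last 30 days."
)
print(vague)
print(specific)
```

The vague prompt from earlier in this guide misses all three checks, while the more specific one passes them. A keyword match does not guarantee a good prompt, so treat the result as a reminder, not a verdict.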

By mastering these prompt patterns, engineering organizations can shift from reactive debugging to proactive quality management, enabling faster delivery with greater confidence.

Takeaways

While many software testing tools offer AI-powered features such as test authoring, Sauce Labs is one of the only software testing platforms that provide AI-powered insights into release health. Instead of searching manually, users of Sauce AI for Insights can query in natural language to quickly surface macro patterns, diagnose root causes, and anticipate release risks. But, as with any AI product, the quality of the insight depends heavily on the prompt.

The true value of AI in testing is its ability to facilitate a shift from reactive troubleshooting to proactive quality management. To see Sauce AI for Insights in action, head over to our interactive demo. And if you’d like to talk to a testing expert about incorporating AI-driven insights into your software testing program, schedule a live chat and demo today.